AI Video Weekly Roundup — February 28, 2026

Welcome to RCTV’s first AI Video Weekly Roundup — your concise briefing on the most important developments at the intersection of artificial intelligence, video production, and digital media. Every week we cut through the noise to bring you the stories that matter.

🎬 Seedance 2.0 Drops — And Hollywood Is Nervous

The biggest story of the month: ByteDance released Seedance 2.0, and the AI video world hasn’t been the same since. The model generates cinematic-quality video from text prompts with native audio, accurate lip-sync, and multi-layered environments in a single pass. Videos of Tom Cruise and Brad Pitt in fabricated fight scenes went viral within hours, prompting cease-and-desist letters from Disney and Paramount.

What makes Seedance 2.0 different isn’t just quality — it’s the unified multimodal engine that generates video, audio, and lip-sync together, eliminating heavy post-production workflows. The model also offers motion editing controls that put it closer to a real production tool than a novelty generator.

Deadpool writer Rhett Reese captured the mood: the line between AI-generated and live-action footage is getting dangerously thin.

Why it matters: China is now matching or exceeding US AI video capabilities in several dimensions. The competitive pressure will accelerate the entire field. Meanwhile, the deepfake implications have regulators scrambling — ByteDance has already had to roll back a voice-cloning feature and add identity verification for avatar creation.

🔧 Google Flow Gets a Major Redesign

Google quietly made one of the most significant moves of the month by consolidating its AI creative tools into a unified workspace. Flow — originally a video generation tool — now incorporates the capabilities of Whisk (image remixing) and ImageFX (image generation) into a single interface.

Key new features include:

- Extend: Generate what happens next in a clip

- Inpaint: Add or remove elements from scenes using text prompts

- Camera Control: Direct shots with pans, zooms, and custom movements

- Nano Banana integration: Create high-fidelity images and use them directly as video generation inputs

Google reports that Flow users have created over 1.5 billion images and videos since launch. Starting in March, users can migrate their Whisk and ImageFX projects directly into Flow.

Why it matters: Google is betting that the future of AI creative tools isn’t standalone generators — it’s integrated workflows. Flow is becoming a full creative suite where you go from concept to finished video without leaving the platform.

📊 The State of AI Video: February 2026 Snapshot

A comprehensive analysis from Cliprise lays out where the major models stand this month:

- Kling 3.0 (Kuaishou): The most feature-dense model available. Native 4K at 60fps, built-in audio, and a storyboard feature that produces up to six visually consistent camera cuts in a single generation. Per Cliprise, it is the first AI video model to meet broadcast delivery standards without upscaling.

- Sora 2 (OpenAI): Now powering real ad campaigns. Strong on narrative coherence and prompt fidelity.

- Veo 3.1 (Google): Pushing photorealistic rendering to the point where trained observers struggle to identify generated footage in blind tests.

- Seedance 1.5 Pro / 2.0 (ByteDance): Best-in-class for character consistency and cinematic motion.

- Runway Gen-4 Turbo: Leading in stylized and abstract content — VFX-oriented aesthetics and non-photorealistic work.

- LTX-2 (Lightricks): The standout for local/desktop generation — 20 seconds of 4K video with audio, running on consumer GPUs.

The key takeaway: the question is no longer “which model is best” — it’s which model is best for each specific shot. Multi-model workflows are becoming the professional standard.

🖥️ NVIDIA Brings 4K AI Video to Your Desktop

At CES 2026, NVIDIA announced a wave of upgrades that make local AI video generation genuinely viable for creators. The headline: a new pipeline using LTX-2 and ComfyUI that generates 4K AI video locally on RTX GPUs — no cloud dependency required.

The numbers NVIDIA cites are compelling: 3x faster performance and 60% less VRAM usage for video generation, both attributed to PyTorch optimizations, plus native support for NVIDIA’s new NVFP4/NVFP8 data formats. NVIDIA also introduced DGX Spark playbooks for those who want to run heavier AI workflows on dedicated hardware.
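To make those two headline figures concrete, here is a minimal back-of-the-envelope sketch. The baseline render time and VRAM footprint are hypothetical placeholders, not NVIDIA measurements, and the function name `apply_claimed_gains` is ours. It also assumes "3x faster" means the render time is divided by three.

```python
def apply_claimed_gains(baseline_seconds: float,
                        baseline_vram_gb: float,
                        speedup: float = 3.0,
                        vram_reduction: float = 0.60) -> tuple[float, float]:
    """Apply a claimed speedup and VRAM reduction to a baseline.

    Assumption: "3x faster" is read as time / 3, and "60% less VRAM"
    as baseline * (1 - 0.60).
    """
    optimized_seconds = baseline_seconds / speedup
    optimized_vram_gb = baseline_vram_gb * (1.0 - vram_reduction)
    return optimized_seconds, optimized_vram_gb


# Hypothetical baseline: a clip that took 300 s and 30 GB of VRAM
# before the optimizations. These numbers are illustrative only.
seconds, vram_gb = apply_claimed_gains(baseline_seconds=300.0,
                                       baseline_vram_gb=30.0)

# Would the optimized job fit on a 16 GB consumer card?
fits_on_16gb_card = vram_gb <= 16.0

print(f"Render time: {seconds:.0f} s, VRAM: {vram_gb:.0f} GB, "
      f"fits on 16 GB card: {fits_on_16gb_card}")
```

Under those assumed inputs, a job that would not fit on a 16 GB card before the optimizations does fit afterward, which is the kind of threshold effect that makes the VRAM figure matter more than the raw speedup for many creators.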

Why it matters: Cloud-based AI video generation has latency, cost, and privacy trade-offs. Local generation on consumer hardware — especially at 4K with built-in audio — changes the economics of AI content creation entirely. This is particularly relevant for independent creators and small studios.

⚖️ The Deepfake Regulation Wave Intensifies

The regulatory landscape around AI-generated video is shifting fast:

- DEFIANCE Act: Passed unanimously by the U.S. Senate in January 2026, this bill creates a federal right of action for victims of non-consensual sexually explicit deepfakes, with statutory damages up to $250,000. It now heads to the House.

- Colorado’s AI Act: Originally set to take effect February 1, 2026, the law was delayed by the legislature to June 30, 2026. It requires risk assessments for high-risk AI systems.

- EU AI Act Article 50: Taking effect August 2026, it will require all AI-generated content to carry machine-readable metadata marking it as synthetic.

- State-level surge: 46 states have now enacted some form of deepfake legislation, with 146 bills introduced in 2025 alone.

Meanwhile, the Grok deepfake controversy continues to reverberate — xAI’s lax content filters allowed users to generate explicit images of real people, triggering investigations in multiple countries and lawsuits from high-profile victims.

Why it matters: The regulatory environment is catching up to the technology — fast. Content creators and platforms working with AI video need to stay ahead of disclosure requirements, watermarking standards (C2PA is emerging as the industry standard), and liability frameworks. If you’re producing AI video commercially, compliance is no longer optional.

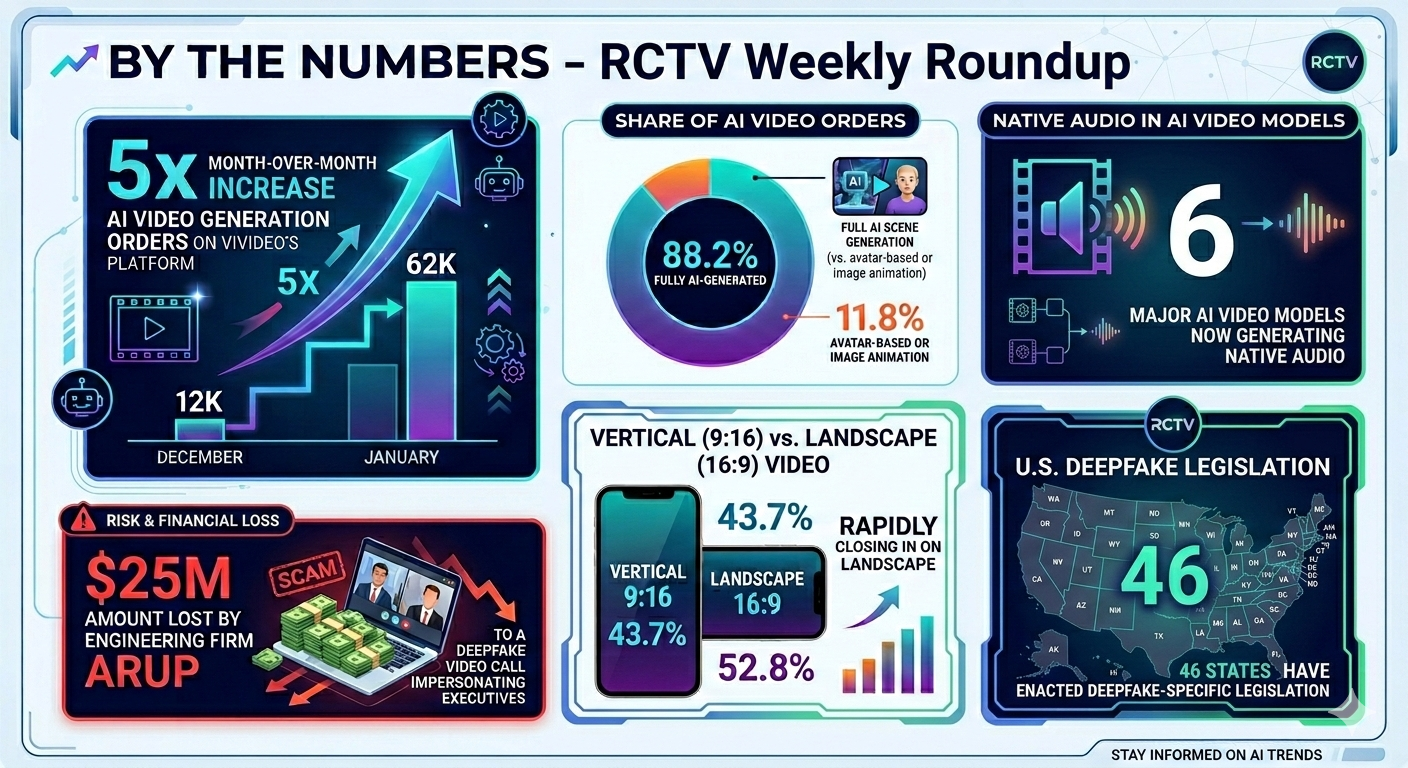

📈 By the Numbers

- 5× — Month-over-month increase in AI video generation orders on Vivideo’s platform (12K in December → 62K in January)

- 88.2% — Share of AI video orders that are fully AI-generated (vs. avatar-based or image animation)

- 43.7% — Vertical (9:16) video’s share of AI generations, rapidly closing in on landscape (52.8%)

- $25M — Amount lost by engineering firm Arup to a deepfake video call impersonating executives

- 46 — U.S. states that have enacted deepfake-specific legislation

- 6 — Major AI video models now generating native audio
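For readers who want to check the arithmetic behind the first and third figures, here is a minimal sketch. It uses only the rounded values quoted above (12K, 62K, 43.7%, 52.8%), so the results are approximate. The variable and function names are ours.

```python
def growth_multiple(before: float, after: float) -> float:
    """Return how many times larger `after` is than `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return after / before


# Order counts as quoted in the list (rounded to the nearest thousand).
december_orders = 12_000
january_orders = 62_000

multiple = growth_multiple(december_orders, january_orders)
percent_increase = (multiple - 1) * 100

# Aspect-ratio shares as quoted in the list, in percent.
vertical_share = 43.7
landscape_share = 52.8

gap_points = landscape_share - vertical_share          # percentage points
other_share = 100 - vertical_share - landscape_share   # all other ratios

print(f"Growth multiple: {multiple:.2f}x ({percent_increase:.0f}% increase)")
print(f"Landscape lead over vertical: {gap_points:.1f} points")
print(f"Share left for other aspect ratios: {other_share:.1f}%")
```

The multiple comes out slightly above five, which is why the headline figure rounds to 5×. The two aspect-ratio shares do not sum to 100%, so a small remainder belongs to other formats such as square video.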

🔮 What to Watch Next Week

- Seedance 2.0 access: ByteDance is rolling out broader availability — watch for hands-on reviews from professional creators

- DEFIANCE Act: RCTV will be tracking the bill’s downstream effects as it moves to the House

- ICLR 2026: Looking further ahead, the April conference will feature EPFL’s work on eliminating video drift, a foundational advance that could unlock unlimited-length AI video

RCTV’s AI Video Weekly Roundup is published every Friday. Subscribe to stay ahead of the curve.