OpenAI’s consumer Sora app went dark on Sunday — the executed end of an arc announced 33 days ago. The same week, Adobe quietly turned Firefly into a 30-model aggregation hub for video, Tencent shipped an open-source 3D world model to match Alibaba’s Happy Oyster from two weeks back, and a Johannesburg agency proved Luma Agents can carry a national auto commercial. The week’s headline isn’t a new model — it’s that the platform layer is now where AI video is being decided.

Models covered: Sora · Kling · Veo · Runway · Hunyuan · Luma · Grok

🌑 Sora’s Consumer App Went Dark on Sunday

OpenAI shut down the Sora consumer app on Sunday, April 26, executing the discontinuation announced March 24. The web interface for browsing community generations is gone. The standalone iOS app is gone. The export window for personal generations closed with the shutdown.

The Sora API remains live until September 24, 2026 — a five-month runway for any developer who built on top of the model to migrate. After that, the entire Sora line retires. OpenAI’s stated reason for pulling the consumer product was a strategic refocus on world simulation for robotics. We unpacked that framing in detail on April 20; the short version is that OpenAI is conceding the open creator market to Kling, Veo, Runway, and the rest, while reserving Sora’s underlying tech for vertical applications.

The pulse log we ran daily through this week confirms what the announcement implied: no surprise reversal, no extension, no last-minute consumer-tier replacement. OpenAI is exiting the AI video front end. The API remains as a wind-down accommodation, not a continued bet.

Why it matters: A year ago, Sora was the model everyone benchmarked against. Today, OpenAI is a major lab without a consumer AI video product, and the market did not collapse around its absence. The headline lesson is that distribution and tooling now matter more than the model itself. The companies that own user surfaces (Adobe, Google, Kuaishou, ByteDance) are eating the labs that don’t.

🧰 Adobe Firefly Becomes the Aggregation Layer for AI Video

On April 15, Adobe extended Firefly into a multi-model AI video hub hosting more than 30 third-party AI models. The roster now includes Kling 3.0 and Kling 3.0 Omni alongside Google’s Veo 3.1 and Nano Banana 2, Runway’s Gen-4.5, ElevenLabs’ Multilingual v2, and additional models from Luma AI, Black Forest Labs, and Topaz Labs.

Adobe paired the model expansion with the launch of Firefly AI Assistant, an agent layer that orchestrates multi-step workflows across Photoshop, Premiere, Lightroom, Express, and Illustrator from a single natural-language prompt. The pitch: a creator describes the outcome, and Firefly routes the task across whichever combination of models and Creative Cloud apps fits best.

Kling 3.0 Omni’s addition is particularly notable — it brings shot duration, camera angle, and character movement controls across multi-shot sequences directly into Adobe’s pipeline. Kling 3.0’s native 4K at 60fps, which shipped in February, now reaches every Firefly user without a separate Kuaishou subscription.

Why it matters: Adobe has the audience that AI video labs want and don’t have — millions of paying creative professionals already in the daily workflow. By aggregating Kling, Veo, Runway, Luma, and the rest, Adobe positions itself as the meta-platform: the place where the “which model is best this week” question becomes a routing decision instead of a subscription choice. This is the same playbook the App Store ran on mobile distribution. Model labs that want consumer reach increasingly need a deal with Adobe to get there.

🌍 Tencent Open-Sources HY-World 2.0, Matching Alibaba’s 3D Worlds Push

Tencent’s Hunyuan team released HY-World 2.0 on April 16, an open-source multi-modal world model that generates editable 3D scenes (meshes plus Gaussian splats) from text prompts or single reference images. The accompanying WorldMirror 2.0 inference code and weights are also open-sourced, giving the local-deployment community a complete pipeline for 3D scene generation.

This lands just over a week after Alibaba’s Happy Oyster — also a 3D world model, also from a Chinese lab, but closed-access. Together, they signal that the next AI video frontier isn’t longer or higher-resolution clips; it’s interactive, navigable 3D environments. Tencent’s choice to open-source the full stack — including weights — is the more aggressive bet, and it puts immediate competitive pressure on Alibaba’s still-gated Happy Oyster early access.

The technical distinction matters: HY-World 2.0 produces editable geometry (meshes + splats), which means the output drops into existing 3D pipelines for game engines, VR, and traditional rendering. Happy Oyster’s Directing and Wandering modes are designed for real-time exploration but don’t expose the underlying 3D representation in the same standards-friendly way.

Why it matters: Two of China’s largest AI labs shipping 3D world models within two weeks, one of them fully open-source, isn’t coincidence. It’s a frontier shift. Western labs (Runway, Luma, Pika) have nothing comparable in production. If the rationale OpenAI cited for Sora’s shutdown, that world simulation matters more than clip generation, turns out to be correct, the Chinese labs already have a 6-to-12-month head start on shipping it.

🚗 Luma Agents Carry Mazda’s First AI-Produced Commercial

Boundless, a Johannesburg creative agency, delivered Mazda’s first AI-produced commercial using Luma Agents in under two weeks of production time. The spot replaces a traditional shoot (location scouting, crew, equipment, post) with an agent-orchestrated workflow that handled scripting, generation, and editing at a fraction of the budget and timeline a comparable live-action ad would have required.

This continues a thread RCTV has been tracking: Luma is converting its agent platform from demo to working production infrastructure. Wonder Project’s Innovative Dreams launch two weeks ago put Luma Agents on professional film sets with AWS backing; the Boundless work puts the same toolchain in front of a Tier 1 automotive client at agency timelines. Different surface, same substrate.

The Boundless turnaround also clarifies what “AI-native” production actually looks like in 2026: the agency still owns the brief, the brand strategy, and the final cut. Luma Agents handle the labor of generation. The split is closer to a video editor’s relationship with Premiere than to “the AI made an ad.”

Why it matters: Mazda is the kind of brand whose legal and brand-safety review will not approve a production process it doesn’t trust. That a national auto spot ran through Luma Agents, and shipped, is the most credible production-deployment signal we’ve seen for any AI video platform this year. When name-brand creative shops adopt the toolchain, the production-speed argument stops being theoretical.

👁️ xAI Adds Native Video Understanding to Grok 4.3 Beta

On April 17, xAI released Grok 4.3 Beta with native video understanding, letting Grok analyze video as a coherent temporal sequence rather than as isolated frames. This is distinct from Grok Imagine — xAI’s video generation product — but the two now stack: Grok can both generate video and reason about video content within the same model family.

Combined with the Grok Imagine Pro 1080p tier teased for late April, xAI is positioning Grok as the only major model line offering native generation, native understanding, and X-platform-scale distribution under a single subscription. No other lab has that vertical integration today — OpenAI shed its consumer video product on Sunday, Google’s Veo and Gemini live in different surfaces, and Adobe’s Firefly is an aggregator rather than a single-model stack.

The video understanding capability matters most for agentic workflows: a Grok agent can now watch a video, summarize it, fact-check claims against the visual content, or use it as input to a generation task without a separate vision model in the loop. For the creator pipeline, that’s the missing primitive between “generate a clip” and “iterate on a clip.”

Why it matters: Vertical integration is the one card OpenAI just folded. xAI is doubling down on it instead. If Grok Imagine Pro ships with credible 1080p output and the understanding model holds up under load, xAI will have the cleanest end-to-end AI video experience of any lab, with built-in distribution to a half-billion-MAU social network. That’s a different competitive shape than the model-as-product play Sora ran.

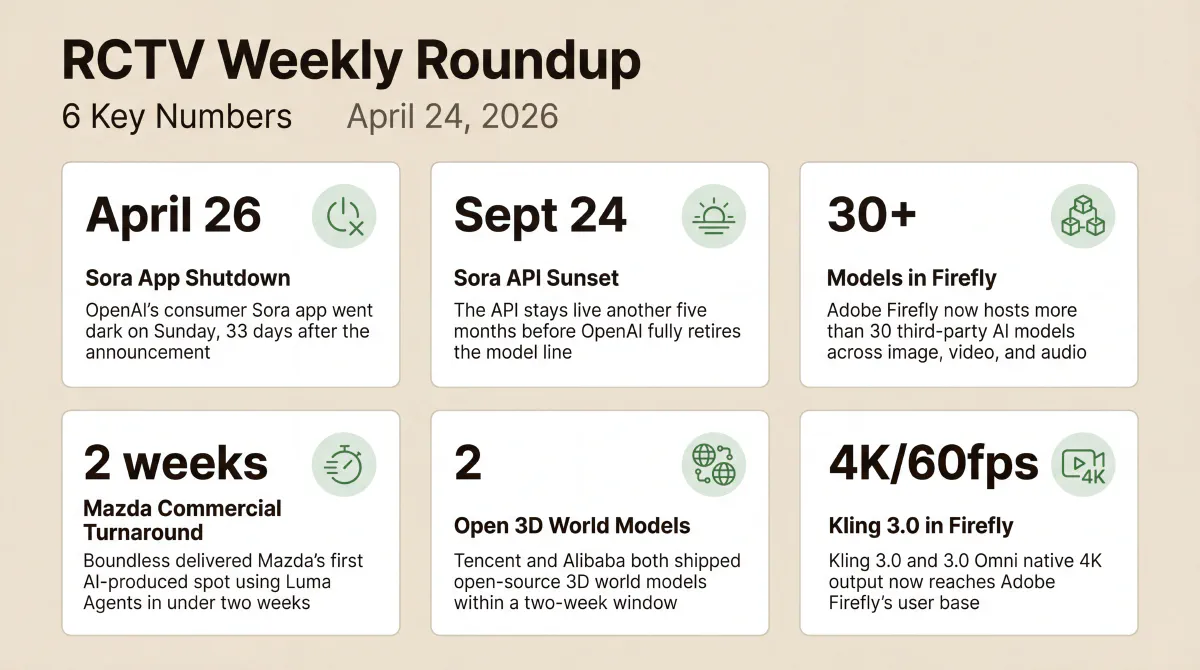

📈 By the Numbers

- April 26 — Sora’s consumer app shutdown executed on schedule, 33 days after the March 24 announcement

- September 24 — Sora API sunset date; the model line fully retires after that

- 30+ — Third-party AI models now in Adobe Firefly’s video and creative hub

- 2 weeks — Boundless agency’s turnaround for Mazda’s first AI-produced commercial via Luma Agents

- 2 — 3D world models from major Chinese labs within two weeks: Alibaba’s closed-access Happy Oyster, then Tencent’s open-source HY-World 2.0

- 4K / 60fps — Kling 3.0’s native output resolution and frame rate, now reaching Firefly users without a Kuaishou subscription
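
For readers who want to check the timeline figures, here is a minimal sketch of the date arithmetic behind the day counts in this issue. The event dates come from the text above; the issue date of Monday, April 27, 2026 is an assumption inferred from the "22 days out" figure and is not stated anywhere in the issue. The constant names and the `days_between` helper are illustrative only.

```python
from datetime import date

# Dates stated in this issue (all in 2026).
ANNOUNCED = date(2026, 3, 24)            # Sora discontinuation announced
SHUTDOWN = date(2026, 4, 26)             # Sora consumer app shut down
API_SUNSET = date(2026, 9, 24)           # Sora API retires
COMPLIANCE_DEADLINE = date(2026, 5, 19)  # TAKE IT DOWN Act platform deadline

# Assumption: the issue date is Monday, April 27, 2026 (not stated in the text).
ISSUE_DATE = date(2026, 4, 27)


def days_between(start: date, end: date) -> int:
    """Return the number of calendar days from start to end."""
    return (end - start).days


if __name__ == "__main__":
    print("Announcement to shutdown:", days_between(ANNOUNCED, SHUTDOWN), "days")
    print("Shutdown to API sunset:", days_between(SHUTDOWN, API_SUNSET), "days")
    print("Issue date to compliance deadline:",
          days_between(ISSUE_DATE, COMPLIANCE_DEADLINE), "days")
```

Under these assumptions the script reports 33 days from announcement to shutdown, 151 days (about five months) of remaining API runway, and 22 days to the compliance deadline.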

🔮 What to Watch Next Week

- HappyHorse weights release — Alibaba’s frontier video model has been listed as “weights coming soon” since April 10. Any open release immediately reshapes the local-generation landscape

- Grok Imagine Pro launch — Musk said “later this month.” If 1080p ships in the next seven days at anywhere near current Grok Imagine pricing, Adobe’s aggregation play gets a new must-include model

- Pika Labs activity — The Pika blog has been dark since PikaStream 1.0 on April 2. Pika was one of the loudest brands of 2024–2025; its silence through April is starting to look like more than a content lull

- TAKE IT DOWN Act compliance — The May 19 platform compliance deadline is 22 days out, and the first federal criminal conviction under the act, on April 8, gave it an enforcement precedent. We’re tracking platform-side policy responses and deferring deeper analysis until post-deadline implementation patterns surface. Expect compliance announcements from major hosts as the date approaches

For full specs, pricing, and access details on every model covered this week, see the AI Video Stack 2026 reference page — updated every Monday.