The happyhorse.me/open-source landing page says what it says: “Happy Horse 1.0 is Open Source.” The page goes further. It describes base weights, a distilled model, a super-resolution module, and inference code as “publicly available on GitHub under a permissive license.”

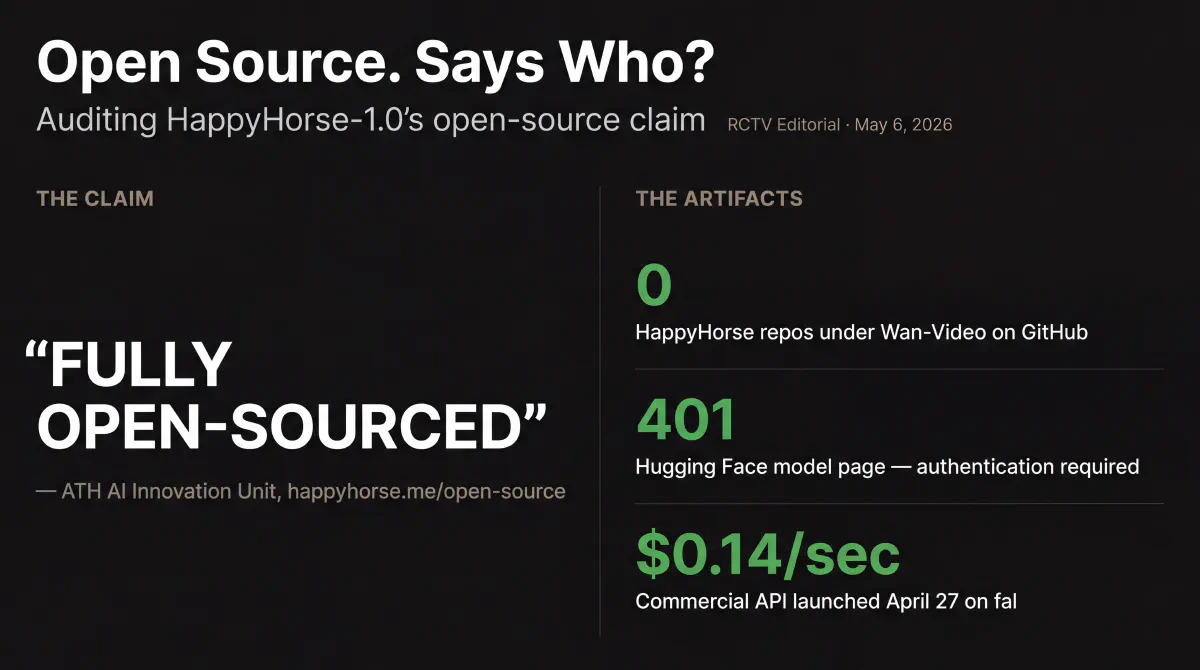

Go to GitHub. The canonical Wan-Video organization — Alibaba’s official repository home for its video model work — carries four repos: Wan2.1, Wan2.2, a diffusers fork, and a Wan-skills agent toolkit. There is no HappyHorse-1.0 repository. Not a placeholder. Not an empty shell. Nothing.

Go to Hugging Face. The happyhorse-ai/happyhorse-1.0 model page exists. It returns a 401. That means the model card is real and access is gated — you need the model owner’s permission to see what’s inside. “Publicly available” and “requires authentication to access” are not the same thing.
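The check is easy to repeat. A minimal sketch, assuming only Python’s standard library: the labels returned by `classify` are our own shorthand, not terms GitHub or Hugging Face define, and a status code only tells you what an anonymous client is allowed to see.

```python
import urllib.error
import urllib.request

def classify(status: int) -> str:
    """Map an HTTP status code to a coarse access label (labels are ours)."""
    if 200 <= status < 300:
        return "public"
    if status in (401, 403):
        return "auth required"
    if status == 404:
        return "not found"
    return "inconclusive"

def probe(url: str, timeout: float = 10.0) -> str:
    """Fetch a URL with no credentials and classify the response status."""
    request = urllib.request.Request(url, headers={"User-Agent": "access-probe"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return classify(response.status)
    except urllib.error.HTTPError as err:
        # urlopen raises HTTPError for 4xx/5xx; the code is still informative.
        return classify(err.code)
```

Calling `probe` on the model page URL (assumed here to be `https://huggingface.co/happyhorse-ai/happyhorse-1.0`, built from the org and model names above) should reproduce the result described: an answer other than "public".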

This is the gap the May 4 roundup named but didn’t have room to dissect. So here’s the dissection.

What’s actually in market

On April 27, fal launched HappyHorse-1.0 as its official API partner. Four endpoints: text-to-video, image-to-video, reference-to-video, video-edit. Pricing at $0.14 per second for 720p and $0.28 per second for 1080p — pay-per-second, no minimums. Developers can call it today.
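Per-second pricing makes clip costs simple to estimate. A minimal sketch of the arithmetic using the two rates quoted above; the function name and the clip lengths are our own illustration, not part of fal’s API or any published limit.

```python
from decimal import Decimal

# Per-second rates quoted in the text, in US dollars.
RATE_PER_SECOND = {"720p": Decimal("0.14"), "1080p": Decimal("0.28")}

def clip_cost(resolution: str, seconds) -> Decimal:
    """Estimated cost of one clip: rate per second times duration."""
    return RATE_PER_SECOND[resolution] * Decimal(str(seconds))

# Hypothetical clip lengths, chosen only to show the arithmetic.
print(clip_cost("720p", 5))    # 0.70
print(clip_cost("1080p", 10))  # 2.80
```

Decimal arithmetic is used instead of floats so the cents come out exact.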

The model backing those endpoints is technically impressive. Fal’s documentation describes a 15-billion-parameter unified 40-layer self-attention Transformer that generates video and audio jointly in a single forward pass with no cross-attention modules: no separate audio post-processing, no Foley layer stapled on afterward. Native lip-sync covers seven languages: English, Mandarin, Cantonese, Japanese, Korean, German, and French. Inference takes roughly 38 seconds for a 1080p clip on a single H100.

On the same day, Alibaba Cloud Bailian opened enterprise-grade access for its own customers, with full commercialization queued for May. Pixazo added a third API distribution path on April 29.

The commercial surface is live. The open-weight surface is not.

What the leaderboard position means here

HappyHorse’s Elo 1,354 on the Artificial Analysis text-to-video leaderboard — captured May 3, 83 points ahead of Dreamina Seedance 2.0 in second place — is the score that made the open-source claim matter. Models top leaderboards all the time. Most of them don’t claim open weights.

When a model sits at #1 and markets itself as open source, that combination creates a specific kind of expectation in the developer community: this is the model we can run locally, tune, integrate on our own infrastructure, and not pay API pricing for. That expectation is what the open-source marketing claim sets up. The gap between that expectation and the artifact state is what the leaderboard position amplifies.

The audio leaderboard complicates the picture in a different direction. On the text-to-video-with-audio ranking, HappyHorse sits at Elo 1,218, two points behind Dreamina Seedance 2.0 at 1,220. The order flips relative to the no-audio result, but only barely. For shots where audio is part of the brief, the gap closes to statistical noise. The silent-video dominance is genuine; the audio story is a near-tie.
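To put the two gaps on a common footing, a rating difference can be converted into an expected head-to-head preference rate. This is a sketch under a stated assumption: it uses the textbook logistic Elo formula with a 400-point scale, which may not match how Artificial Analysis computes or calibrates its ratings.

```python
def expected_win_rate(rating_gap: float, scale: float = 400.0) -> float:
    """Textbook Elo expected score for the side that leads by rating_gap.

    Assumes the standard logistic curve on a 400-point scale; the
    leaderboard's actual method may differ.
    """
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / scale))

# HappyHorse vs. Seedance 2.0, from HappyHorse's side.
print(round(expected_win_rate(83), 3))  # 0.617 (no-audio gap of 83 points)
print(round(expected_win_rate(-2), 3))  # 0.497 (with-audio gap of -2 points)
```

Under that assumption, an 83-point lead corresponds to being preferred in roughly 62% of pairings, while a 2-point deficit is indistinguishable from a coin flip.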

A stealth reveal on April 7 with Elo 1,333. Alibaba unmasked as the creator on April 10. A commercial API launch on April 27. Twenty days from pseudonymous reveal to commercial access, with “fully open-sourced” as a consistent part of the marketing narrative throughout — and no public weights at any point in that arc.

The verification trail

WaveSpeedAI ran the check on April 8 — one day after HappyHorse entered the arena. At that point: no GitHub repository, no Hugging Face model card, no weights, no inference code, no license file. The conclusion was direct: HappyHorse fell into the “open access” category (demo and API), not true open source (downloadable, inspectable, locally runnable).

Twenty-five days later, the happyhorse.me/open-source page claims the weights are published. But the Wan-Video GitHub org (the organization where Wan2.1 and Wan2.2 live, and the natural home for any official HappyHorse release) still has no HappyHorse repository. The Hugging Face model page requires authentication. A third-party fan repo (CalvintheBear/HappyHorse-1.0) exists on GitHub, and it is the one a developer searching for HappyHorse weights would land on first. It carries no releases, no weights, and no ATH affiliation.

There is no architecture paper on arXiv. For a model claiming 15 billion parameters and a novel joint audio-video generation approach, that absence matters. The fal.ai model page is the most detailed technical description in public, and it is a commercial partner’s product page, not a research artifact. A 15B-parameter unified Transformer with joint audio-video generation and no cross-attention is a specific and testable claim. Testing requires access to the weights. Until they are available, it is the marketing’s word with no artifact to check it against.

What this means for the credibility test

The marketing language and the artifact state are not aligned, and the mismatch has now persisted for nearly four weeks.

There are two distinct RCTV reads, both compatible with the facts. The generous read: ATH separated the commercial API timeline (move fast, capture market share, let fal own developer access) from the open-weight timeline (more complex, still coming), and the marketing surface didn’t track that separation cleanly. The less generous read: “open source” was the marketing claim that drove three weeks of leaderboard coverage and community interest, and the artifact hasn’t caught up.

Neither requires speculating about strategy. Both are consistent with what the sources show.

The credibility test is about what “open source” means when a lab says it. If weights ship in May, the damage is bounded: a slow release, marketing that ran ahead. If May closes without public weights, the claim becomes the kind of thing that follows a model through its lifecycle — and that’s more serious than a missed deadline, because it goes to whether you can trust the technical claims about the model itself.

The closure test is concrete: weights live under the Wan-Video GitHub org or the happyhorse-ai Hugging Face org, with a working license and inference code. Until then, the claim stays unverified. If you’re evaluating HappyHorse-1.0 today, local deployment is not on the table; fal is the actual access surface. The API is good. It’s just not what “fully open-sourced” implies.
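The closure test reduces to a three-item checklist over a repository’s file listing. A rough sketch, with loud assumptions: the weight-file suffixes, the `LICENSE` naming convention, and the use of any `.py` file as a stand-in for inference code are crude heuristics of ours, and the example listings are hypothetical. A file named `LICENSE` also says nothing about whether the terms are actually permissive; that still takes a human read.

```python
from pathlib import PurePosixPath

# Assumed, non-exhaustive set of common weight-file suffixes.
WEIGHT_SUFFIXES = {".safetensors", ".bin", ".pt", ".ckpt"}

def closure_report(paths):
    """Check a repo file listing for weights, a license file, and code."""
    files = [PurePosixPath(p) for p in paths]
    return {
        "weights": any(f.suffix.lower() in WEIGHT_SUFFIXES for f in files),
        "license": any(f.name.upper().startswith("LICENSE") for f in files),
        "inference_code": any(f.suffix.lower() == ".py" for f in files),
    }

def closure_met(paths) -> bool:
    """True only when all three checklist items are present."""
    return all(closure_report(paths).values())

# Hypothetical listings, for illustration only.
print(closure_met(["README.md"]))                                     # False
print(closure_met(["LICENSE", "model.safetensors", "inference.py"]))  # True
```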

We’re tracking it. When the artifact ships, we’ll say so.

HappyHorse-1.0 has appeared in every RCTV roundup since its April 7 debut. For full spec history and prior coverage, see the April 17, April 27, and May 4 roundups.