“A woman in her twenties, wavy brown hair, walking through a rain-slicked Tokyo street at 2am. Low-angle wide shot. Neon reflections on wet asphalt. Cinematic.”

“Subject: woman, confident stride. Style: film noir. Lighting: hard rim light from the left, neon signs bleeding color. Camera: slow dolly back. Constraints: no motion blur on face.”

“Character: woman, early 30s, sharp coat. Mood: tense, deliberate. Scene: empty downtown street, 2am, light rain. Shot: tracking follow at medium distance. Finish: shallow depth of field, cool grade.”

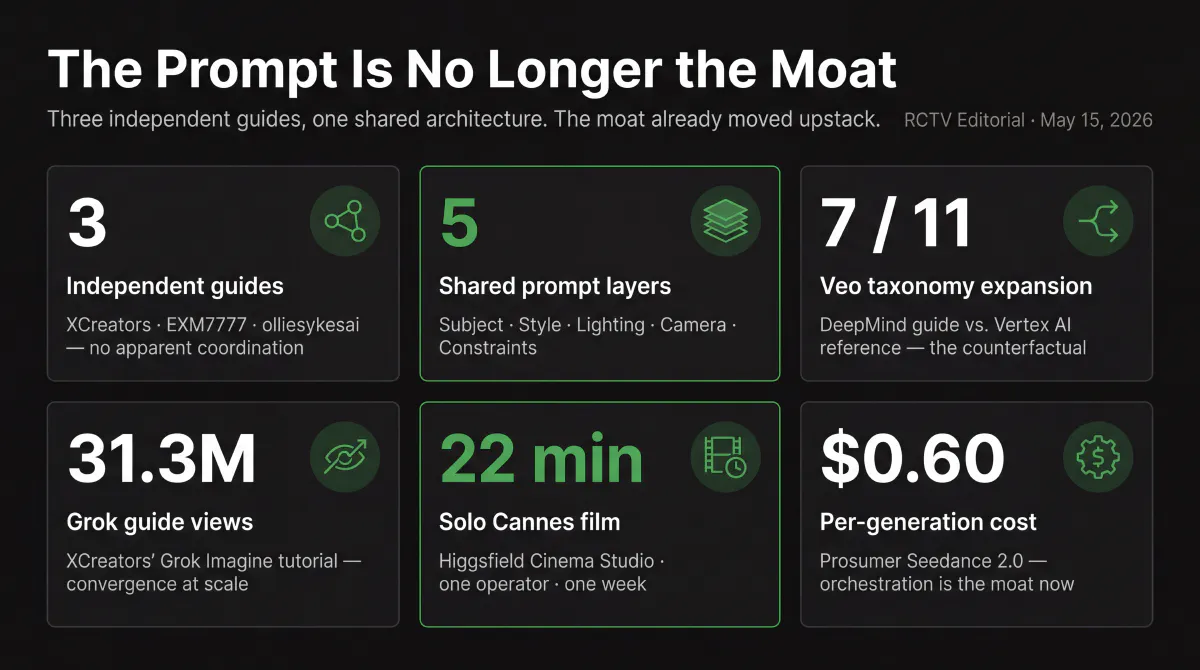

Three different AI video tools. Three independent prompting guides. Read them side by side and the structure is identical — subject, style/mood, lighting, camera, finish — even though the authors never collaborated, the models run on different infrastructure, and the keyword libraries differ at every layer.

This is either a coincidence or a signal. If it’s a signal, the competitive moat in AI video has already moved somewhere else.

The Five Layers

Three sources, same architecture. The keywords differ; the slots don’t.

| Layer | Grok Imagine (xAI/XCreators) | Seedance 2.0 (EXM7777/Volcengine) | Operator stack (olliesykesai) |
|---|---|---|---|
| Subject | Who or what; descriptive detail | Subject: specific visual features of who/what | Character build: age, look, wardrobe |
| Style / Mood | “Blade Runner mood” or “Studio Ghibli feel”; reference-based | Style: visual references and tone | Mood: genre and emotional register |
| Lighting | Named: “golden hour backlight,” “hard rim light from the left” | Environment includes lighting; “lighting descriptions have the single biggest impact on video quality” (per Volcengine docs) | Scene: location with lighting and atmosphere |
| Camera | Named angle + movement: “slow dolly in,” “pan right,” “static wide” | Camera: single primary movement; sequenced, not stacked | Shot: angle and distance; tracking instructions |
| Constraints / Finish | “Keep compositions focused”; finishing details for style lock | Constraints: problems to exclude (jitter, distortion) | Finish: grade, depth of field, final polish |

Sources: XCreators X-Article (captured 2026-03-31); EXM7777 X-Article sourcing Volcengine official documentation (captured 2026-05-10); olliesykesai operator workflow (captured 2026-04-06).
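To make the shared structure concrete, here is a minimal sketch of the five slots as a data structure. The class name `FiveSlotPrompt`, its field names, and the `render` format are assumptions made for illustration; none of the three guides publishes a schema like this, and each tool takes free text rather than a typed object.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class FiveSlotPrompt:
    """Hypothetical container for the five slots in the table above."""
    subject: str
    style_mood: str
    lighting: str
    camera: str
    constraints_finish: str

    def render(self) -> str:
        # Emit one labeled clause per slot, in table order, skipping empty slots.
        parts = []
        for f in fields(self):
            value = getattr(self, f.name).strip().rstrip(".")
            if value:
                label = f.name.replace("_", " / ").capitalize()
                parts.append(f"{label}: {value}.")
        return " ".join(parts)


# Slot values taken from the second example prompt at the top of the article.
example = FiveSlotPrompt(
    subject="woman, confident stride",
    style_mood="film noir",
    lighting="hard rim light from the left, neon signs bleeding color",
    camera="slow dolly back",
    constraints_finish="no motion blur on face",
)
print(example.render())
```

The point of the sketch is that the slots stay fixed while the vocabulary inside each one changes per tool.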

For contrast: Google’s official Veo documentation lists seven discrete components on its DeepMind guide and eleven categories in its Vertex AI developer reference: shot framing, style, lighting, character, location, action, dialogue, plus camera movements, lens effects, temporal elements, audio, and negative prompts as distinct slots. Google’s taxonomy is more expansive, not convergent. That expansiveness makes the five-slot compression more interesting, not less: three independent sources arrived at the same compression without following Google’s model.

Argument 1 — The Convergence Is Real Within the Documented Set

Three independently authored guides. Non-overlapping authors.

XCreators is xAI’s official first-party creator account — the canonical Grok Imagine tutorial surface, with 31.3 million views on the guide in question. @EXM7777 is an independent creator whose Seedance 2.0 prompting guide draws explicitly on Volcengine’s own engineering documentation. olliesykesai is an operator describing his own production workflow — Sora 2 Pro for audio-sync, Nano Banana 2 for character building, Kling 3.0 for animation, FreePik Spaces for orchestration — not citing a prompting guide at all.

None of these authors appears to have read the others. XCreators is writing for the xAI ecosystem. EXM7777 is synthesizing ByteDance’s official Volcengine docs with empirical testing across hundreds of generations. olliesykesai is documenting what works in a live production stack. The convergence is in the architecture, not in the vocabulary.

That distinction matters. The lighting keywords are different. EXM7777’s camera terminology doesn’t match XCreators’. The olliesykesai stack phrases constraints as production polish, not a fifth layer. But the slots exist in all three. Subject gets its own slot. Lighting gets its own slot. Camera gets its own slot. That’s not semantic coincidence — it’s structural.

Google’s Veo taxonomy demonstrates the counterfactual. Where Google has engineering resources to document everything, the taxonomy expands: seven components in the DeepMind consumer guide, eleven in the Vertex AI developer reference. The five-slot compression is a choice, or a convergence, not an inevitability. The three sources arrived at the same choice independently.

Argument 2 — Why This Is Likely Structural

This is the hypothesis, not the conclusion. But the hypothesis has teeth.

Video has compositional axes that text and image share partially, but don’t share completely. Text has no camera. Image has camera angle but no camera motion. Video requires both — angle, movement, continuity over time — and adds lighting-over-time (a sunset shot behaves differently than a static lighting description) and motion constraints (jitter, drift, smear are video-specific failure modes without image equivalents).

If those axes have to be addressed somewhere in the prompt, and the empirical evidence from all three sources suggests they do, then the question is how many slots fit before the prompt becomes a production spec document rather than a creative instruction. Five is approximately what fits in a creator workflow. Ten is a cinematography textbook. Eleven is a developer reference for people building production pipelines.

The three sources that converged on five have one thing in common: their guides are written for creators, not for engineers. XCreators is writing for social-media video makers. EXM7777 is writing for prosumer operators paying $0.60 per generation. olliesykesai is describing a stack for AI-generated user content at production scale, not a studio workflow.

That’s a selection effect worth naming. We may be observing convergence among creator-workflow documentation, not across AI video prompting as a whole. If that’s the boundary, the claim should respect it: “five-slot compression is the stable taxonomy for creator-workflow AI video prompting, across independently documented sources.” Not “every AI video model converges on five layers.”

One other explanation deserves mention: these five slots may reflect what the current generation of video models actually responds to reliably, not what they theoretically accept. EXM7777 makes this explicit — the Seedance guide was built from hundreds of generations and validated against what the model actually does, not what Volcengine’s spec says it should do.

Either way — structural convergence or empirical convergence — the result is the same. The five-slot prompt is now the baseline. Not a proprietary insight. Not a competitive advantage. The table stakes.

Argument 3 — Where the Moat Moves

If the prompt is table stakes, value lives in three other places.

Workflow orchestration. The olliesykesai stack isn’t competing on prompt quality. It’s competing on the ability to assemble, iterate, and control multi-shot sequences at production speed. Nano Banana 2 builds consistent characters. Kling 3.0 animates them. FreePik Spaces orchestrates the pipeline. The prompt is one input to a production system. The system is the margin — the ability to generate at volume, maintain character consistency across shots, and iterate in real time when a client’s creative direction changes at 11pm. A better prompt guide doesn’t help you there.

This is the architectural insight from the XCreators guide that usually gets missed: “Use Grok Projects for recurring creation of storyboards based on your ideas. Storyboard → shot list → individual generations → chain via Extend Video.” The guide describes a workflow, not just a prompt. The prompt is the unit. The workflow is the product.
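The storyboard → shot list → individual generations → chain sequence can be sketched as a small pipeline. Everything here is hypothetical: `Shot`, `build_shot_prompts`, `run_sequence`, and the stub `fake_generate` are illustrative names, not the API of Grok Imagine, Kling, or any other tool. The stub stands in for whatever generate or extend call a real tool exposes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

Clip = Dict[str, object]


@dataclass
class Shot:
    description: str  # what happens in this shot
    camera: str       # one primary camera move per shot


def build_shot_prompts(character: str, shots: List[Shot]) -> List[str]:
    # Repeat the same character description in every prompt so the
    # subject slot stays constant across the whole sequence.
    return [f"{character}. {s.description}. Camera: {s.camera}." for s in shots]


def run_sequence(
    prompts: List[str],
    generate: Callable[[str, Optional[Clip]], Clip],
) -> List[Clip]:
    # Generate shots in order, handing each call the previous clip so a
    # real backend could extend from it (assumed capability).
    clips: List[Clip] = []
    previous: Optional[Clip] = None
    for prompt in prompts:
        previous = generate(prompt, previous)
        clips.append(previous)
    return clips


def fake_generate(prompt: str, previous_clip: Optional[Clip]) -> Clip:
    # Stub generator: records the prompt and which clip it extends.
    if previous_clip is None:
        return {"index": 0, "prompt": prompt, "extends": None}
    prev_index = previous_clip["index"]
    return {"index": prev_index + 1, "prompt": prompt, "extends": prev_index}


shot_list = [
    Shot("walks past a shuttered storefront", "tracking follow"),
    Shot("stops under a streetlight", "static wide"),
]
prompts = build_shot_prompts("woman, early 30s, sharp coat", shot_list)
clips = run_sequence(prompts, fake_generate)
```

In this framing the prompt is a single element of `prompts`, while the reusable asset is the loop around it: the shot list, the shared character description, and the chaining.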

Framework-level abstraction. HeyGen’s Hyperframes makes a different bet. The framework — Apache 2.0, built for AI agents — doesn’t accept subject/style/lighting/camera/constraints. It accepts HTML. The composition language is HTML/CSS/JS with a GSAP timeline; the agent writes code, the framework renders video. Hyperframes maps natural-language adjectives to technical settings: smooth maps to power2.out, snappy maps to power4.out, bouncy maps to back.out. The vocabulary table is the interface design — around thirty natural-language descriptors that compile to framework-level settings, instead of a creative brief that the model interprets each time.
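The adjective-to-setting idea can be illustrated with a plain lookup table. This is a sketch of the concept only, not Hyperframes’ actual API: the three mappings are the ones quoted above, the ease strings are standard GSAP ease names, and `EASE_BY_ADJECTIVE`, `resolve_ease`, and `tween_vars` are hypothetical names introduced for the example.

```python
# The three descriptor-to-ease pairs quoted in the text; a full table
# would have around thirty entries.
EASE_BY_ADJECTIVE = {
    "smooth": "power2.out",
    "snappy": "power4.out",
    "bouncy": "back.out",
}


def resolve_ease(adjective: str) -> str:
    # Normalize the descriptor, then look it up. Unknown words fail loudly
    # instead of being interpreted, which is what makes the result repeatable.
    key = adjective.strip().lower()
    if key not in EASE_BY_ADJECTIVE:
        raise ValueError(f"no ease mapped for descriptor: {adjective!r}")
    return EASE_BY_ADJECTIVE[key]


def tween_vars(adjective: str, duration: float) -> dict:
    # Build the settings an agent might place into a GSAP tween call.
    return {"duration": duration, "ease": resolve_ease(adjective)}


settings = tween_vars("snappy", 0.4)
```

The design choice worth noticing is the failure mode: a lookup either resolves to one fixed setting or raises, whereas a free-text prompt is reinterpreted on every generation.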

Hyperframes is the exception that proves the rule. Operating one layer above where the five-slot convergence happened, it bypasses the prompt layer entirely. Instead of a better video prompt, HeyGen shipped a declarative composition spec where the agent is the author and the renderer is deterministic. The output is reproducible. The composition is versionable. The prompt, in the conventional sense, is gone.

Tier-specific positioning. Sora’s shutdown established the framework: consumer AI video subscriptions are structurally broken on cost versus retention. The viable path is professional infrastructure: priced per output, built for production repeatability, with unit economics that survive at scale. Higgsfield Cinema Studio is the clearest current evidence: a Cannes-winning director shot a 22-minute film solo in one week. That’s not a feat of prompt engineering. It’s a feat of platform positioning: a tool built for filmmakers, not for consumer app users, occupying a tier where professional infrastructure can survive.

The prompt taxonomy commoditized at the creator tier. At the Cinema Studio tier, the question isn’t which five slots to fill. It’s whether the platform can maintain shot continuity across a 22-minute runtime with a single operator. Those are different problems with different moats.

Primary-source documentation from the vendors themselves now covers most of what creator-workflow prompting courses charge for. The XCreators guide is free and has 31 million views. The EXM7777 guide is public. This isn’t an argument against structured learning — it’s an observation that the reference material has arrived at the primary level.

Resolution

Three things are now true.

The five-layer prompt structure — subject, style/mood, lighting, camera, constraints — has emerged across independently authored guides from non-overlapping sources. It’s the baseline for creator-workflow AI video, not the differentiator.

Value in AI video has moved upstack. The workflow layer (multi-shot orchestration, character consistency, production speed) is where operator advantage compounds. The framework layer (Hyperframes’ declarative composition spec) represents a different bet: make the prompt unnecessary by replacing it with a more expressive composition language. The tier layer (professional infrastructure vs. consumer subscription) remains the structural frame the Sora shutdown established.

Here is the falsifiable prediction: the next AI video framework that reaches significant adoption will operate at the Hyperframes layer — HTML/CSS/JS or an equivalent declarative spec — not at the prompt layer. Check back in twelve months.

For current specs, pricing, and benchmark rankings across all active AI video models, see the AI Video Stack 2026 reference page — updated every Monday.